
8/19/2023 Data2vec

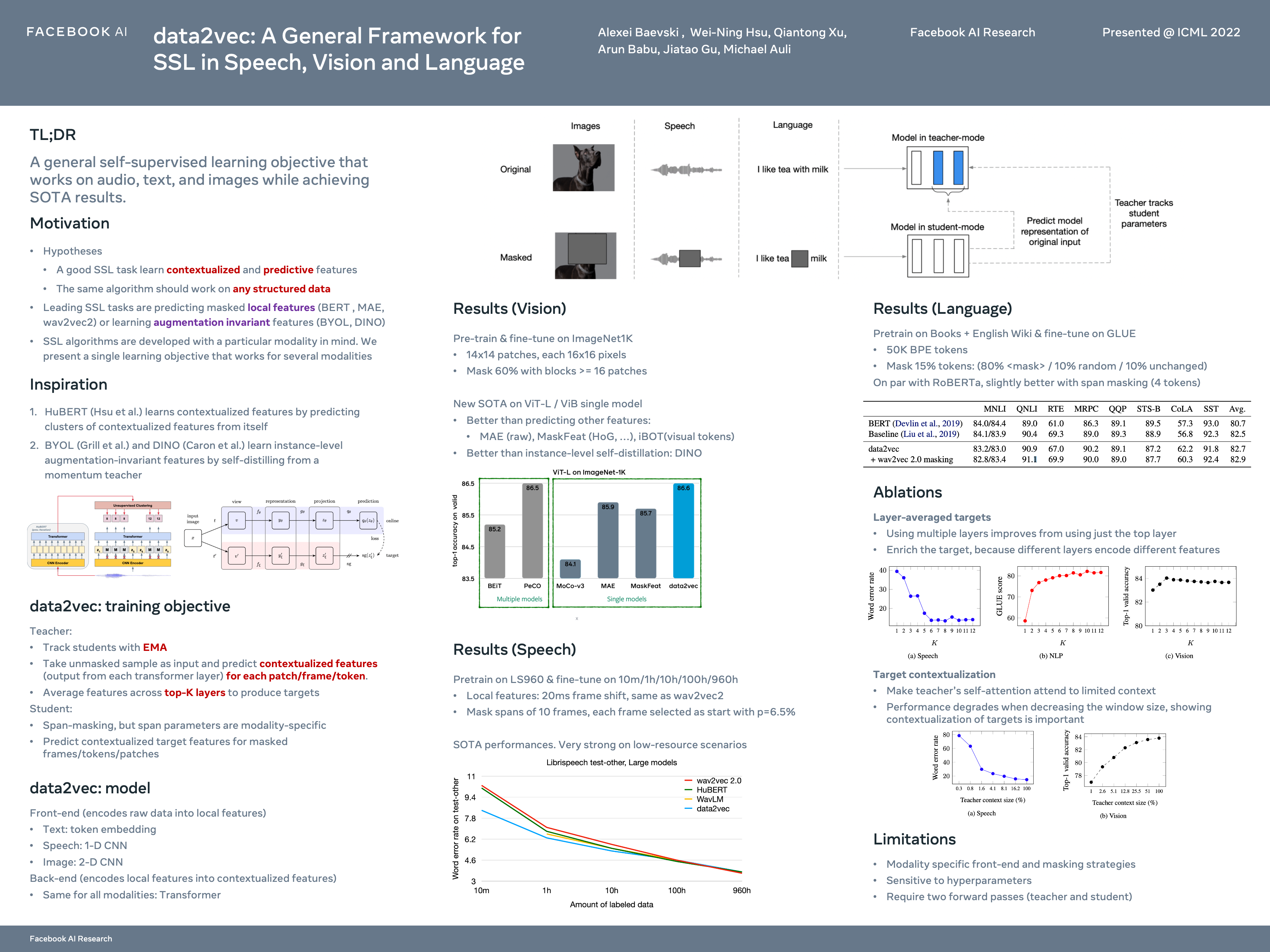

We are announcing TorchMultimodal Beta, a PyTorch domain library for training SoTA multi-task multimodal models at scale. The library provides composable building blocks (modules, transforms, loss functions) to accelerate model development, SoTA model architectures (FLAVA, MDETR, Omnivore) from published research, training and evaluation scripts, as well as notebooks for exploring these models. You can find more details on how to get started here. The library is under active development, and we’d love to hear your feedback!

Interest is rising around AI models that understand multiple input types (text, images, videos and audio signals), and optionally use this understanding to generate different forms of outputs (sentences, pictures, videos). Recent work from FAIR such as FLAVA, Omnivore and data2vec has shown that multimodal models for understanding are competitive with unimodal counterparts, and in some cases are establishing a new state of the art. Generative models such as Make-a-video and Make-a-scene are redefining what modern AI systems can do.

As interest in multimodal AI has grown, researchers are looking for tools and libraries to quickly experiment with ideas, and build on top of the latest research in the field. While the PyTorch ecosystem has a rich repository of libraries and frameworks, it’s not always obvious how components from these interoperate with each other, or how they can be stitched together to build SoTA multimodal models. TorchMultimodal solves this problem by providing:

- Composable and easy-to-use building blocks which researchers can use to accelerate model development and experimentation in their own workflows. These are designed to be modular, and can be easily extended to handle new modalities.
- End-to-end examples for training and evaluating the latest models from research. These should serve as starting points for ongoing/future research, as well as examples for using advanced features such as integrating with FSDP and activation checkpointing for scaling up model and batch sizes.

TorchMultimodal is a PyTorch domain library for training multi-task multimodal models at scale. In the repository, we provide:

Building Blocks. A collection of modular and composable building blocks like models, fusion layers, loss functions, datasets and utilities. Some examples include:

- Contrastive Loss with Temperature. Commonly used function for training models like CLIP and FLAVA. We also include variants such as ImageTextContrastiveLoss used in models like ALBEF.
- Codebook layers, which compress high-dimensional data by nearest-neighbor lookup in an embedding space and are a vital component of VQVAEs (provided as a model in the repository).
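To make the contrastive loss with temperature mentioned above more concrete, here is a minimal, dependency-free sketch of the symmetric image-text formulation popularized by CLIP. It is not TorchMultimodal's implementation or API: the function names, the plain-list inputs, and the default temperature of 0.07 are assumptions chosen for illustration, and a real training loop would operate on tensors and typically learn the temperature.

```python
import math


def l2_normalize(vec):
    # Scale a vector to unit length (assumes the vector is non-zero).
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def cross_entropy_diagonal(logits):
    # Mean cross-entropy where the correct class for row i is column i.
    total = 0.0
    for i, row in enumerate(logits):
        row_max = max(row)  # subtract the max for numerical stability
        log_sum_exp = row_max + math.log(sum(math.exp(v - row_max) for v in row))
        total += log_sum_exp - row[i]
    return total / len(logits)


def contrastive_loss_with_temperature(image_embs, text_embs, temperature=0.07):
    # Hypothetical helper: image_embs[i] and text_embs[i] form a matching pair.
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    # Cosine similarity of every image with every text, sharpened by 1/temperature.
    logits = [
        [sum(a * b for a, b in zip(img, txt)) / temperature for txt in txts]
        for img in imgs
    ]
    logits_t = [list(col) for col in zip(*logits)]  # text-to-image direction
    # Average the image-to-text and text-to-image losses.
    return 0.5 * (cross_entropy_diagonal(logits) + cross_entropy_diagonal(logits_t))
```

A lower temperature sharpens the softmax over similarities, so matching pairs must stand out more clearly from the other pairs in the batch to keep the loss small.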

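The codebook lookup described above can be illustrated in a similar way. The sketch below, again in plain Python rather than TorchMultimodal's actual layer, maps each input vector to the index of its nearest codebook entry under squared Euclidean distance; the names `squared_distance` and `quantize` are hypothetical, and a VQVAE would additionally learn the codebook and pass gradients through the lookup, which this sketch omits.

```python
def squared_distance(a, b):
    # Squared Euclidean distance between two equal-length vectors.
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(vectors, codebook):
    # Hypothetical helper: replace each vector by its nearest codebook entry.
    # Returns the chosen indices (the compressed representation) and the
    # corresponding codebook vectors (the quantized reconstruction).
    indices = [
        min(range(len(codebook)), key=lambda k: squared_distance(v, codebook[k]))
        for v in vectors
    ]
    quantized = [codebook[k] for k in indices]
    return indices, quantized
```

The compression comes from storing only the integer indices: each high-dimensional vector is represented by one entry number in a shared embedding table.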